The 2025 Foundation Model Transparency Index

2025 AI Transparency Backslide: Average Score Falls to 40, IBM Takes the Crown, xAI and Midjourney Bring Up the Rear

AI models are advancing at a staggering pace, yet we still know remarkably little about how these “black boxes” are built and deployed. The 2025 Foundation Model Transparency Index (FMTI), released by Stanford University and other institutions, reveals a worrying trend: even as the technology races ahead, the industry’s overall transparency is deteriorating sharply.

arXiv URL: http://arxiv.org/abs/2512.10169v1

This heavyweight annual report not only gave established giants such as OpenAI and Google their yearly “checkup,” but also evaluated Chinese companies such as Alibaba and DeepSeek for the first time. The results were striking: the average score plunged from 58 last year to 40, falling nearly back to the level of the first index in 2023.

The “Winter” of Transparency: Who Is Exposed, and Who Is Leading?

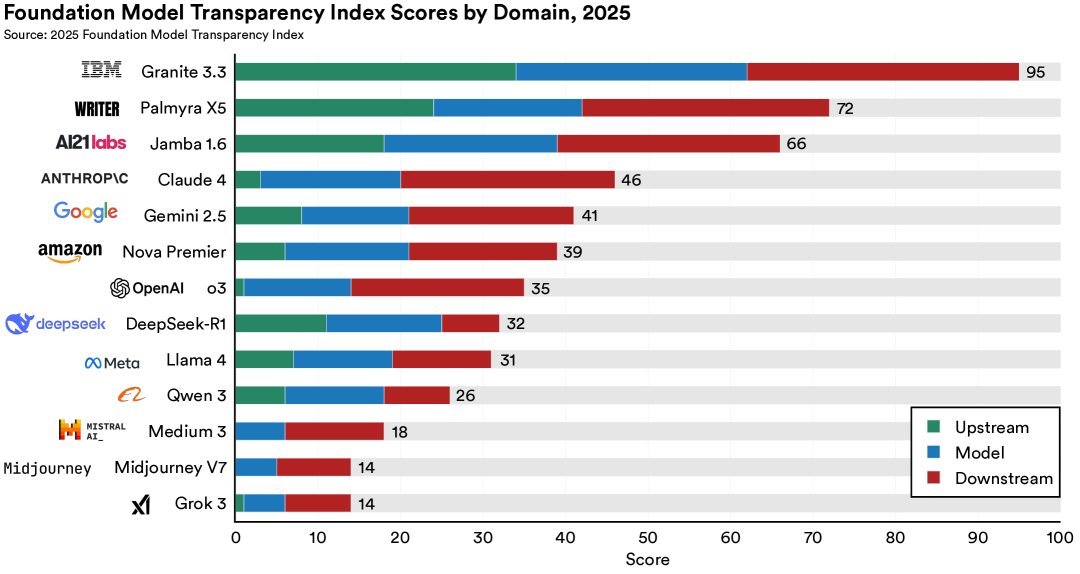

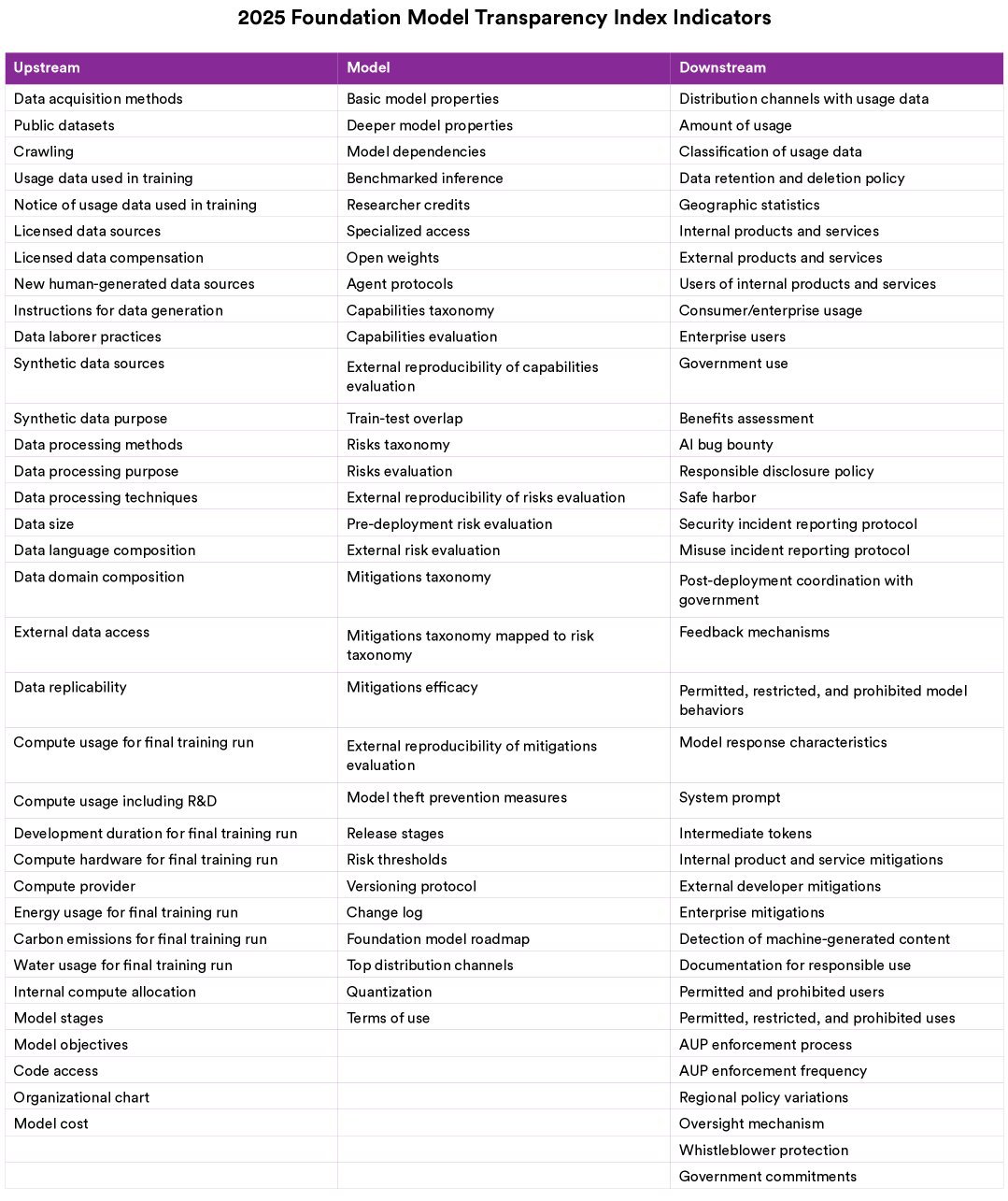

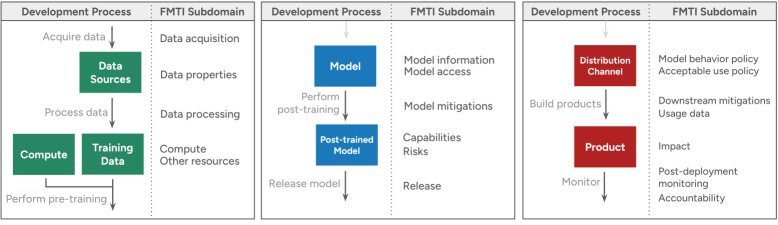

This year’s FMTI report evaluated 13 of the world’s top foundation model developers. The research team designed an assessment framework with 100 indicators, covering the entire process from upstream data and model construction to downstream impact.

The stark contrast between the winners and the laggards:

- Champion (IBM): IBM finished far ahead of the pack with a score of 95/100, setting the benchmark for transparency. It disclosed details in many areas where other companies remain tight-lipped, such as data sources and compute resources.

- At the bottom (xAI & Midjourney): Elon Musk’s xAI and the image-generation company Midjourney scored only 14 points each, making them the least transparent developers evaluated.

- The “middling” giants: Members of the Frontier Model Forum, including OpenAI, Google, Anthropic, Amazon, and Meta, clustered in the middle tier with an average score of about 36. The report pointedly notes that these companies appear to have settled into a tacit equilibrium: scoring high enough to avoid reputational damage, but with little incentive to compete for the title of transparency leader.

The debut of Chinese companies:

Chinese companies evaluated for the first time this year, including Alibaba, DeepSeek, and others, turned in mixed results. Although their overall scores fell in the lower-middle range (DeepSeek and Alibaba, together with Meta, averaged 30 points), their inclusion is a step toward a more complete global map of AI transparency.

The Truth Behind the Score Collapse: Stricter Standards and Deliberate Concealment

Why did the average score fall from 58 to 40 this year? It is not just because lower-scoring new companies were added; many established players also “regressed” on key metrics.

1. The “black-boxing” of core resources

Companies are most secretive about “upstream resources”: training data and training compute are the two biggest black holes.

- Data sources: Almost no company is willing to disclose the specific sources and composition of its training data in detail, even though this information bears directly on copyright and bias concerns.

- Compute costs: Although the public is curious about the enormous cost of training large models, the exact number of FLOPs (floating-point operations) used and the energy consumed are often treated as trade secrets. For example, AI21 Labs disclosed compute and carbon-emission data in 2024 but chose to withhold it in 2025.
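To make concrete what a compute disclosure would contain, here is a rough sketch using the widely cited rule of thumb that training a dense transformer costs about 6 FLOPs per parameter per training token (C ≈ 6ND), plus a simple facility-energy estimate from accelerator-hours, average power draw, and data-center PUE. These are common back-of-the-envelope approximations, not the FMTI’s method, and every input below is hypothetical.

```python
def training_flops(num_params: float, num_tokens: float) -> float:
    """Rule-of-thumb training compute for a dense transformer: C ~ 6 * N * D.

    Roughly 2 FLOPs per parameter per token for the forward pass and 4 for
    the backward pass; ignores attention overhead and other details.
    """
    return 6.0 * num_params * num_tokens


def training_energy_kwh(num_accelerators: int, hours: float,
                        avg_power_kw: float, pue: float = 1.2) -> float:
    """Facility-level energy: accelerator-hours x average draw x PUE.

    PUE (power usage effectiveness) scales IT power up to whole-facility
    power; the default of 1.2 is a placeholder, not a measured value.
    """
    return num_accelerators * hours * avg_power_kw * pue


if __name__ == "__main__":
    # Hypothetical model: 7e9 parameters trained on 2e12 tokens.
    print(f"{training_flops(7e9, 2e12):.2e} FLOPs")  # 8.40e+22 FLOPs
    # Hypothetical run: 1,024 accelerators for 30 days at 0.7 kW each.
    print(f"{training_energy_kwh(1024, 30 * 24, 0.7):,.0f} kWh")
```

Even numbers this coarse are what developers increasingly decline to publish, which is why compute sits among the least transparent areas.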

2. Tougher evaluation standards

FMTI 2025 substantially revised its indicators in an effort to separate substantive disclosure from superficial claims.

- Rejecting vague descriptions: In the past, simply describing a model capability (such as “text generation”) was enough to earn points. Now, companies must list the “capabilities specifically optimized during post-training” to score.

- Emphasizing reproducibility: It is no longer enough to claim that a model scores highly on a benchmark. To earn points, companies must release the evaluation code and prompts so that third parties can reproduce the result.
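The two tightened rules can be pictured as simple pass/fail checks. The sketch below is hypothetical: the `Disclosure` record and its field names (`post_training_capabilities`, `eval_code_released`, `eval_prompts_released`) are illustrative and do not come from the FMTI indicator definitions; it only shows how the 2025 bar differs from merely naming a capability or quoting a score.

```python
from dataclasses import dataclass, field


@dataclass
class Disclosure:
    """Hypothetical summary of what a developer has publicly disclosed."""
    described_capabilities: list[str] = field(default_factory=list)
    # Capabilities the developer says were specifically targeted in post-training.
    post_training_capabilities: list[str] = field(default_factory=list)
    reported_benchmark_scores: dict[str, float] = field(default_factory=dict)
    eval_code_released: bool = False
    eval_prompts_released: bool = False


def capabilities_indicator(d: Disclosure) -> bool:
    # A generic description ("text generation") no longer counts; the developer
    # must name the capabilities optimized during post-training.
    return len(d.post_training_capabilities) > 0


def reproducibility_indicator(d: Disclosure) -> bool:
    # A reported score earns credit only if third parties could rerun it:
    # both the evaluation code and the prompts must be public.
    return (bool(d.reported_benchmark_scores)
            and d.eval_code_released
            and d.eval_prompts_released)


# Hypothetical developer that describes capabilities vaguely and quotes a score.
vague = Disclosure(described_capabilities=["text generation"],
                   reported_benchmark_scores={"benchmark_x": 0.9})
print(capabilities_indicator(vague), reproducibility_indicator(vague))  # False False
```

Under the older, looser reading, the same `vague` record would plausibly have earned credit on both counts.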

Technical Breakdown: How Is Transparency Quantified?

To measure transparency scientifically, the research team divided the 100 indicators into three core areas:

- Upstream: Focuses on the resources needed to build the model.
  - Data: Data sources, copyright, licensing, PII (personally identifiable information) handling.
  - Labor: Salaries and working conditions of data annotators.
  - Compute: Hardware details, energy consumption.

- Model: Focuses on the model’s own properties and release.
  - Architecture: Parameter count, number of layers, etc. (many companies now refuse to discuss this).
  - Capabilities and risks: What the model can and cannot do, and potential safety risks.

- Downstream: Focuses on the model’s use and impact.
  - Distribution: Who is using the model?
  - Impact: The real-world effects on users, affected groups, and the environment.
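A minimal sketch of how an index like this can turn disclosures into scores, assuming each indicator is binary and worth one point (as in earlier FMTI editions), so a score out of 100 is simply the number of indicators satisfied. The indicator names below are hypothetical stand-ins, and only eight of the 100 are shown. Grouping results by domain makes lopsided profiles visible, such as strong downstream disclosure alongside nothing upstream.

```python
from collections import defaultdict

# Hypothetical stand-in indicators: (name, domain, subdomain).
# The real index has 100; eight are enough to show the mechanics.
INDICATORS = [
    ("data sources", "upstream", "data"),
    ("data licenses", "upstream", "data"),
    ("annotator wages", "upstream", "labor"),
    ("training hardware", "upstream", "compute"),
    ("parameter count", "model", "architecture"),
    ("known risks", "model", "capabilities and risks"),
    ("usage policy", "downstream", "distribution"),
    ("affected groups", "downstream", "impact"),
]


def score(satisfied: set[str]) -> tuple[int, dict[str, tuple[int, int]]]:
    """Return the total score and, per domain, (indicators met, indicators available).

    Assumes every indicator is binary and worth exactly one point.
    """
    counts = defaultdict(lambda: [0, 0])  # domain -> [met, available]
    for name, domain, _subdomain in INDICATORS:
        counts[domain][1] += 1
        if name in satisfied:
            counts[domain][0] += 1
    by_domain = {domain: (met, avail) for domain, (met, avail) in counts.items()}
    total = sum(met for met, _ in by_domain.values())
    return total, by_domain


# A developer that publishes downstream policies but nothing upstream:
print(score({"usage policy", "affected groups"}))
# (2, {'upstream': (0, 4), 'model': (0, 2), 'downstream': (2, 2)})
```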

An Interesting Finding: Can AI Agents Replace Human Evaluators?

During this year’s evaluation, the research team ran an experiment: using AI agents to help collect transparency information about each company.

The results showed that AI agents can indeed speed up information retrieval, but they are still far from replacing human reviewers. Agents are prone to hallucinating or being misled by surface-level information (false positives), and they can also miss key details buried in technical documents (false negatives). In the end, the FMTI team still verified all information manually.
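The two failure modes map onto standard evaluation terms. Here is a hedged sketch, assuming one has the set of indicators an agent flagged as disclosed and the set the human team confirmed; the indicator names are hypothetical, and the report’s own accounting may differ.

```python
def agent_vs_human(agent_flagged: set[str], human_verified: set[str]) -> dict[str, float]:
    """Score an agent's findings against human-verified disclosures.

    False positive: the agent reported a disclosure humans could not confirm.
    False negative: humans confirmed a disclosure the agent missed.
    """
    tp = len(agent_flagged & human_verified)
    fp = len(agent_flagged - human_verified)
    fn = len(human_verified - agent_flagged)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return {"tp": tp, "fp": fp, "fn": fn, "precision": precision, "recall": recall}


# Hypothetical indicator sets for one developer.
agent = {"data sources", "parameter count", "usage policy"}
human = {"parameter count", "usage policy", "training hardware"}
result = agent_vs_human(agent, human)
print(result["fp"], result["fn"])  # 1 1
```

Precision drops with every false positive and recall with every false negative; as long as both are imperfect, each agent finding still needs a human check.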

Conclusion: Transparency Is a Choice, Not a Technical Problem

The most important takeaway from the 2025 FMTI report is that differences in transparency are driven mainly by corporate willingness, not by technical or structural barriers.

The high scores of IBM, Writer, and AI21 Labs show that commercial companies can achieve a high degree of transparency while remaining competitive. By contrast, some companies score extremely high on downstream application policies (such as terms of use) yet score zero on training data, a sharp contrast that reveals deliberate, strategic opacity.

As policymakers worldwide, including those behind the EU AI Act, begin to mandate certain types of transparency, this report serves not only as a record of the current state of affairs but also as a guide for future regulation. If market competition cannot deliver transparency, more aggressive policy intervention may become inevitable.