Opal: An Operator Algebra View of RLHF

-

ArXiv URL: http://arxiv.org/abs/2509.11298v1

-

Author:

-

Publishing Organization: Microsoft

TL;DR

This paper proposes Opal, an operator-algebraic view of reinforcement learning from human feedback (RLHF), and GKPO, a standardized exchange pattern. Through a reduction law that holds if and only if three core assumptions are satisfied, it unifies, compares, and verifies different RLHF objective functions, thereby organizing the field’s sprawling methods.

Key Definitions

The paper introduces or adopts the following concepts, which are essential for understanding its core ideas:

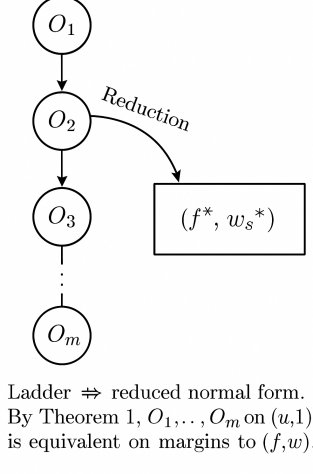

- Ladder: A way of formalizing RLHF objective functions, described as a sequence of primitive operators acting on a base score \($u\)$.

- Primitive Operators: The basic building blocks of a “ladder,” mainly of two types:

- Additive penalties \($\mathcal{A}[\lambda, \phi]\)$: shift the utility function, in the form \($f \mapsto f - \lambda\phi\)$.

- Multiplicative pairwise weights \($\mathcal{M}[\omega]\)$: scale pairwise utility differences (margins), in the form \($W \mapsto W \cdot \omega\)$.

- It also includes a reference adjustment \($ \mathcal{R}[\Delta\_{\mathrm{ref}}]\)$.

- Reducible Class ($\mathcal{R}$): The set of ladder structures that can be losslessly “folded” or reduced into a unified normal form. A ladder belongs to this class if and only if it satisfies three conditions: (1) the reference is fixed; (2) the penalties are additive; (3) the weights are independent of the intermediate utility difference.

- Normal Form: The final simplified form of a reducible ladder, whose pairwise margin can be written as \($M = (\Delta f^{\ast} - \Delta\_{\mathrm{ref}}) w^{\ast}\)$, where \($f^{\ast}\)$ is the sum of all additive penalties and \($w^{\ast}\)$ is the product of all multiplicative weights.

- GKPO (Generalized Kernel Preference Object): A standardized JSON schema for representing any pairwise RLHF objective. For methods in the reducible class, GKPO can normalize them into the Opal normal form and generate a deterministic hash; for non-reducible methods, it explicitly marks the failed assumptions and provides a “witness.”

Related Work

At present, the field of reinforcement learning from human feedback (RLHF) is filled with a wide variety of methods, such as PPO-RLHF, Direct Preference Optimization (DPO), ranking-based methods (RRHF), Offset Regularized Optimization (ORPO), and others, forming a sprawling “zoo of methods.”

This proliferation of methods raises a fundamental question: are these seemingly different objective functions truly different in essence, or are they merely different algebraic combinations of the same underlying operators? This confusion makes it extremely difficult to fairly compare methods, reproduce results, and understand their fundamental differences.

This paper aims to address this problem by introducing a unified operator-algebra framework (Opal) and a standardized representation schema (GKPO) to systematically classify and compare existing RLHF objective functions, clarifying their equivalence relationships or essential differences.

Method

The core contribution of this paper is the proposal of an operator-algebra framework called Opal, and, based on it, the design of GKPO, a standardized exchange pattern for RLHF objectives.

Opal: An Operator-Algebra View

The Opal framework models RLHF objectives as “operator ladders” acting on the base utility pair \($ (u, 1)\)$. The canonical margin of a ladder \($L\)$ is defined as:

\[M_{L}(x;y^{+},y^{-}) = \bigl{(}\Delta f(x;y^{+},y^{-})-\Delta_{\mathrm{ref}}(x;y^{+},y^{-})\bigr{)}\cdot W(x,y^{+},y^{-})\]where \($f\)$ is the transformed utility, \($W\)$ is the pairwise weight, and \($\Delta\_{\mathrm{ref}}\)$ is the reference adjustment.

Primitive Operators and Ladders

A ladder is composed of the following three primitive operators:

- Additive penalties \($\mathcal{A}[\lambda,\phi]\)$: adjust the utility via \($f \mapsto f-\lambda\phi\)$.

- Multiplicative weights \($\mathcal{M}[\omega]\)$: adjust the weight via \($W \mapsto W\cdot\omega\)$.

- Reference adjustment \($\mathcal{R}[\Delta\_{\mathrm{ref}}]\)$: directly modify the reference term \($\Delta\_{\mathrm{ref}}\)$.

Because additive operators and multiplicative operators satisfy commutativity and associativity, respectively, any ladder can be represented in a “collected” form:

\[f = u-\sum_{i\in I}\lambda_{i}\phi_{i},\qquad W = \prod_{j\in J}\omega_{j}\]Innovation: Reducibility and Normal Form

The most important theoretical contribution of this paper is the proposal of a Reduction Law:

An operator ladder \($L\)$ can be reduced to a normal form \($M\_{L} \equiv (\Delta f^{\ast}-\Delta\_{\mathrm{ref}}) w^{\ast}\)$ if and only if the following three assumptions hold:

- Fixed Reference: \($\Delta\_{\mathrm{ref}}\)$ is constant across all prompts.

- Additive Penalties: penalty terms can be linearly added to the utility function \($f\)$.

- Score-independent Weights: the multiplicative weight \($w\)$ does not depend on the intermediate utility difference \($\Delta f\)$.

When these assumptions do not hold, the method becomes non-reducible. The paper provides explicit, finite “witnesses” for each failure mode, i.e., concrete counterexamples proving non-reducibility:

- Reference shift: when \($\Delta\_{\mathrm{ref}}\)$ varies with the prompt, no single fixed normal form can match all decisions.

- Non-additive gates: when penalties have gating logic (such as \($\mathbf{1}\{\phi\_1=0\}\phi\_2\)$), they cannot be represented by a single non-negative additive surrogate.

- Score-dependent weights: when weights are functions of \($\Delta f\)$, the order of operator application changes the final decision, so no utility-independent \($w^{\ast}\)$ exists.

GKPO: A Standardized Exchange Pattern

Building on the theory of Opal, the paper designs GKPO (Generalized Kernel Preference Object), a concrete, executable JSON schema for RLHF objectives.

Advantages

GKPO’s design has the following core advantages:

- Unified representation: GKPO provides a unified, method-agnostic representation for all pairwise RLHF objectives. This makes it straightforward to compare the configurations of different methods. Its JSON schema includes key components such as utility, weights, reference, loss function, penalty terms, and more.

- Automatic canonicalization and hashing:

- For RLHF methods that satisfy the reducibility conditions, GKPO can automatically canonicalize them into the Opal normal form.

- By deterministically serializing the canonicalized JSON and applying SHA-256 hashing, an Opal hash is generated. This hash is unique for all algebraically equivalent objective functions, providing a powerful tool for method reproduction and verification.

- Explicit failure diagnosis: When a method is irreducible, GKPO does not attempt to force a conversion. Instead, it explicitly marks \(inside_R: false\) in the \(reducibility\) field and indicates the reason for failure (such as \(reference_shift\)), while also providing a minimal “witness” to prove it. This makes the method’s underlying assumptions and limitations transparent.

- Inter-method converter: GKPO acts as an “exchange layer” between methods. As long as a method is reducible, inter-method conversion can be achieved through the path \($X \to \text{GKPO} \to Y\)$, while preserving its margins and decisions (within the range of positive scaling).

GKPO Example

A minimal GKPO example of a DPO method is shown below: ``\(json { "version": "gkpo-1.0", "score": { "type": "logpi" }, "weight": { "form": "constant", "constant": 1.0 }, "reference": { "form": "fixed_scalar", "value": 0.10 }, "link": "identity", "loss": "logistic", "beta": 1.0, "penalties": [], "provenance": { "method": "DPO", "citations": ["rafailov2023direct"] }, "reducibility": { "inside_R": true, "reasons": [], "witness": {} } }\)`$$

Experimental conclusions

This paper does not conduct large-scale benchmarking; instead, it validates the effectiveness of the Opal algebra and the GKPO schema through a series of carefully designed “demonstrations” and “stress tests.”

- Feasibility validation: Through concrete toy examples, mainstream methods such as DPO and RRHF are successfully represented as GKPO instances. For example, RRHF’s ranking penalty is expressed as the \(penalties\) term in GKPO, demonstrating its expressive power within the reducible class.

- Equivalence validation: It is shown that, under the reducibility assumptions, conversion between different methods is feasible. For instance, under fixed reference and additive penalty conditions, RRHF can be reduced to a DPO-equivalent form. Likewise, PPO-RM (with fixed reference) is also proven reducible to the DPO form, and GKPO makes this equivalence explicit.

- Irreducibility validation: Three “stress tests” (SHIFT/GATE/SCORE) clearly demonstrate three failure modes:

- SHIFT (reference shift): Two prompts with the same original utility difference but different reference shifts are constructed, resulting in opposite final margin signs, proving that a fixed normal form cannot match both simultaneously.

- GATE (non-additive gating): A gated penalty example is constructed, proving that no equivalent non-negative additive surrogate can be found.

- SCORE (utility-dependent weights): It is shown that when weights depend on the utility difference, the order of operator application changes the sign of the final decision, thereby proving irreducibility.

Final conclusion: These demonstrations and tests strongly support the paper’s core argument. The Opal framework and the GKPO schema can effectively classify, compare, and transform existing RLHF methods. For reducible methods, GKPO reveals their algebraic equivalence; for irreducible methods, it clearly identifies where their core assumptions break down, providing great clarity and rigor for understanding and reproducing RLHF research.

The table below summarizes the method classification from the Opal perspective:

| Method | Reducibility | Key difference (Delta) in GKPO representation |

|---|---|---|

| PPO-RM | Depends | KL anchor as an additive penalty on \($f\)$. Reducible if the reference is fixed. |

| DPO | Yes | The normal form itself. \($f=u, w=1\)$, with reference \($\log \pi\_{\text{ref}}\)$. |

| RRHF | Depends | Ranking penalty as an additive penalty on \($f\)$. Reducible if the penalty is linear. |

| ORPO | Depends | Offset as the reference term \($\Delta\_{\text{ref}}\)$. Reducible if the reference is fixed. |

| KTO / GRPO | Depends | Variance-shaping term can be used as a multiplicative weight \($w\)$. Reducible if \($w\)$ is independent of \($\Delta f\)$. |

| f-DPO / VAR | No | The reference term $$$\Delta_{\text{ref}}$` varies with the prompt (reference shift). |

| RLAIF / CAI | Depends | Dataset-level operator. Reducible if additive/multiplicative. |